Instead of settling for a lazy term, put more effort into describing the flaws you see in the code and the improvements you can think of.

Be specific about the flaws you see, even at the cost of being more verbose: “I find this function too complicated.” “This class has multiple responsibilities.” “The variable name does not describe its purpose clearly.”

Give a specific suggestion on how to fix the issue if it is not obvious from your description. Things like “This class has multiple responsibilities. What would you think of breaking it into two parts, one responsible for serialisation, the other for price calculation?” or “I find the name calculate hard to understand. How about calling it getPriceWithoutVAT instead?”
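To make the two suggestions above concrete, here is a minimal Python sketch of what the reviewed code might look like after the change. Everything in it is hypothetical: the `Order`, `PriceCalculator`, and `OrderSerializer` names are invented for illustration, and `get_price_without_vat` is simply the snake_case spelling of the suggested `getPriceWithoutVAT`.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class Order:
    """Hypothetical value object; the fields are assumptions for the example."""
    gross_price: float  # price including VAT
    vat_rate: float     # e.g. 0.20 for 20 %


class PriceCalculator:
    """Holds only the price calculation responsibility."""

    def get_price_without_vat(self, order: Order) -> float:
        # The name says what is computed, unlike a bare "calculate".
        return round(order.gross_price / (1 + order.vat_rate), 2)


class OrderSerializer:
    """Holds only the serialisation responsibility."""

    def to_json(self, order: Order) -> str:
        return json.dumps(
            {"gross_price": order.gross_price, "vat_rate": order.vat_rate}
        )
```

A review comment that points at a sketch like this gives the author something specific to agree or disagree with, which is the point of the advice.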

Talk about the future implications of the code you see, if not changed. Things like “I think the class would probably be hard to reuse, because it has many things hardcoded.” or “I’m pretty sure newcomers would find this code hard to comprehend, as it does not follow conventions used across the codebase.”

Ask the person who wrote the code what they think about your comments. Say things like “What do you think about this?” or “Do you think this would make sense?”

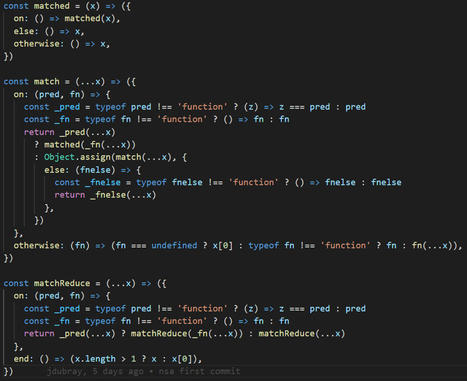

Even Uncle Bob has addressed the subject. On npm alone, countless packages have been released to provide an alternative. People tend to think that the catch with switch and if-then-else is that the more branches your code has, the more opportunities there are for untested and unexpected behavior. Each branch requires its own use cases to be thoroughly tested, although some tools, such as Istanbul, report how many branches have been exercised in addition to the traditional function coverage. But branches are part of the nature of programming; there is nothing we can do about that. In this brief post, I would like to give a new spin on when to use control structures and when to use functional alternatives.

Mickael Ruau's insight:

Ever since I discovered Dr. Lamport’s Temporal Logic of Actions, my programming style has changed to become more explicit about actions versus mutations of the application state, and more aware of temporal logic. For me, there are three core patterns in programming: object-oriented programming, functional programming, and temporal programming. A developer should master all three and use them accordingly; no pattern wins over the others. We cannot write good programs with only one pattern.

One of the key concepts in temporal programming is the control state: when the light is off and the switch is in the off position, I can turn the light on, or else check the switch (in the original, the actions expected in a given control state are set in bold). These are extremely familiar concepts that we use every day, but for some reason we rarely come across control states in the code we write. My view is that this situation arose partly from the lack of alternative constructs with a good enough developer experience, and partly from the ease of use of switch and if-then-else compared with the rigor required by temporal programming constructs, but that discussion is for another article.

I would like to focus here on something much simpler and more practical. First, we must absolutely stop using these control structures for (complex) assignments; it leads to some pretty ugly code that is hard to read and debug. How many times have you written code like that? Come on, tell me in the comments… How about a functional alternative?
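The excerpt stops before showing its own code, so the following is only a sketch of one common functional alternative, not the author's solution: replacing an if/elif chain with a lookup table of functions. The shape names and both function names are assumptions chosen for the example.

```python
import math
from typing import Callable, Dict


def area_with_branches(shape: str, size: float) -> float:
    """Branch-based version: every new shape adds another elif."""
    if shape == "square":
        return size * size
    elif shape == "circle":
        return math.pi * size * size
    elif shape == "equilateral_triangle":
        return math.sqrt(3) / 4 * size * size
    else:
        raise ValueError(f"unknown shape: {shape}")


# Lookup-based version: the cases become data, and the function has one path
# plus one explicit failure mode.
AREA_FORMULAS: Dict[str, Callable[[float], float]] = {
    "square": lambda size: size * size,
    "circle": lambda size: math.pi * size * size,
    "equilateral_triangle": lambda size: math.sqrt(3) / 4 * size * size,
}


def area_with_lookup(shape: str, size: float) -> float:
    try:
        formula = AREA_FORMULAS[shape]
    except KeyError:
        raise ValueError(f"unknown shape: {shape}") from None
    return formula(size)
```

The table does not remove the cases, it only moves them into data. Whether that reads better than the branches depends on how many cases there are and how often they change.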

How do you define good quality software? How do you develop, measure, and ensure its quality? Read this post to learn the answer to these questions and more.

Mickael Ruau's insight:

Which Code Quality Metrics to Use

Ultimately, software engineers and management together need to take care of the customer's needs and leave no quality defects in the measures the client cares about. Different measurements matter to management, to the engineering team, and to the client. Engineers track certain metrics because they find them helpful, and they should share them with others. Management requirements call for certain parameters to be calculated. You should therefore have a set of the most useful code quality metrics for your specific project. The most common metrics that help quantify issues are:

1. Defect Metrics

Defects in your project are a rich source of data for evaluating or improving the functional, structural, and process aspects across the life cycle of your project. The number of defects found in the code and their density give an insight into the problem. Defect density can be defined as the number of defects found in the software during a defined period of development, divided by the module size. The defect discovery rate is the count of defects found over time. Defect metrics states:

2. Complexity Metrics

The previously discussed cyclomatic complexity and Halstead complexity give an insight into properties such as maintainability. They help raise quality by showing where complexity can be reduced. Cyclomatic complexity counts the number of linearly independent paths through the source code, whereas the Halstead complexity measures provide data on the Effort, Vocabulary, Volume, Length, and Difficulty of the program.
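The metrics named above reduce to a few short formulas. The sketch below uses the standard textbook definitions (McCabe's M = E - N + 2P and Halstead's measures); the function names, and the choice of thousands of lines of code (KLOC) as the unit of module size, are assumptions made for this example.

```python
import math
from typing import Dict


def defect_density(defects_found: int, module_size_kloc: float) -> float:
    """Defects per thousand lines of code (KLOC is an assumed size unit)."""
    if module_size_kloc <= 0:
        raise ValueError("module size must be positive")
    return defects_found / module_size_kloc


def cyclomatic_complexity(edges: int, nodes: int, components: int = 1) -> int:
    """McCabe's M = E - N + 2P, computed on the control-flow graph."""
    return edges - nodes + 2 * components


def halstead(distinct_operators: int, distinct_operands: int,
             total_operators: int, total_operands: int) -> Dict[str, float]:
    """Halstead measures derived from operator and operand counts."""
    vocabulary = distinct_operators + distinct_operands
    length = total_operators + total_operands
    volume = length * math.log2(vocabulary)
    difficulty = (distinct_operators / 2) * (total_operands / distinct_operands)
    effort = difficulty * volume
    return {
        "vocabulary": vocabulary,
        "length": length,
        "volume": volume,
        "difficulty": difficulty,
        "effort": effort,
    }
```

For instance, 12 defects found in a 4 KLOC module give a density of 3 defects per KLOC, and a control-flow graph with 9 edges and 8 nodes has a cyclomatic complexity of 3.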

Document the code and the architecture

Documentation is sometimes neglected during development, for lack of time or of visibility over the project as a whole. Yet it is crucial to the maintainability of your project: it gives an overall understanding of how the code works and shows which parts of the code are affected by a change.

Mickael Ruau's insight:

ITAF, 4th Edition | Digital | English

Get the guidance and techniques that will lend consistency and effectiveness to your audits. The new 4th edition of ITAF outlines standards and best practices aligned with the sequence of the audit process (risk assessment, planning and field work) to guide you in assessing the operational effectiveness of an enterprise and in ensuring compliance. ITAF can serve as your reference for mandatory standards and recommended best practices to follow during IT audit and assurance engagements.

Audit de code (code audit) - Wikipédia

In computer programming, a code audit is the practice of going through a program's source code to make sure that specific rules are followed. The audit may have a legal purpose (making sure that the licenses of the different parts of a program do allow it to be distributed as is; see, for example, the internal audit of ReactOS), or it may aim to ensure the software's security by testing its vulnerability, or to look for bugs.

A source code audit is an in-depth analysis of an application's source code to determine whether the way it was developed meets the desired standards. The audit can be carried out automatically and/or manually, and leads to corrective actions and an improvement plan.

Mickael Ruau's insight:

What does a source code audit performed by a human consist of?

The following points are examined first:

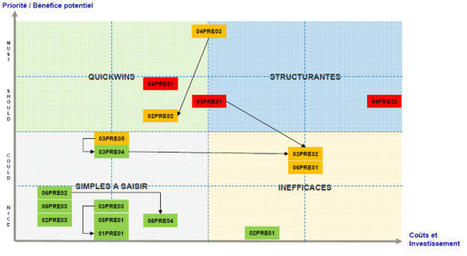

This list is of course not exhaustive, but it reflects the kind of work that only a human can do. At AXOPEN, we are craftspeople of code and of its beauty. Remember that if a computer were able to vouch for the quality of source code, it would be able to write it! Between us, that is not about to happen :)

The audit must also look at the positive aspects of the application's source code. It should highlight strengths and weaknesses, and must never look for culprits or people to blame. An application is always the result of many years of development, project constraints, team changes... All of this has to be kept in mind so that the audit does not turn into a case for the prosecution. The main idea is always to bring out lines of analysis and to give the application owner the keys to decision-making. So each time a positive or negative point is detected, it is reported in the code audit in table form. We also use a qualitative radar chart, which shows at a glance an application's strengths and weaknesses and quickly reveals the points to improve.

The conclusion of a source code audit

It is important to have as complete and objective a view of things as possible. What we are after here is a genuine inventory of the current state. Once the source code analysis is done, it is essential to work on a costed action matrix. This projection gives decision-makers clear visual elements for deciding on the future of the application and its source code. A priority/benefit versus cost/investment matrix is often an effective way to present things. The audit must provide the keys to bringing the source code back up to standard. For each point analysed, the cost of the investment matters because it measures the effort needed to return to the state of the art.

Sonar relies on 7 different types of metrics, which are presented in the following image:

Mob programming can support teams in changing old habits into new effective habits for creating products in an agile way. Collectively-developed habits are hard to forget when you have other people around. Mob programming forces individuals to put new habits into practice regularly, making them easier to adopt. Teams are intolerant of repetition, and are always looking for better ways of doing their work.

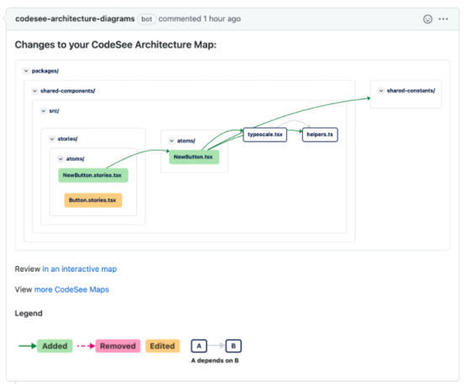

Many developers report that working on old projects with a large codebase is generally a nightmare, since they first have to understand how code written by others works, and must also be able to understand the bugs that arise and apply the appropriate fixes. The same feeling is shared by many developers working on new projects whose codebase is very large. Each time, developers have to build a mental map of all the code they work on so as not to get lost in its twists and turns. To address this difficulty, CodeSee offers Review Maps, a tool integrated into its production platform and designed to give a detailed, real-time map of all the project's code, so that developers can concentrate on the technical aspects of the code rather than on its general comprehension.

Justin Gottschlich, principal scientist and director/founder of Machine Programming Research at Intel Labs, announced on October 20 that ControlFlag, Intel's automated debugging tool, is now open source.

Mickael Ruau's insight:

It automatically detects anomalies in code and saves hours of tedious work.

Key Takeaways

Mickael Ruau's insight:

When we write automated tests, we can run into problems. The tests will not pass, or we will spend a lot of time on the effort. “This code is not testable,” we would say. Mostly, that is not true. Code is always testable, but the cost can be high and the effort exhausting. The good news is that we can change the code to make it highly testable by identifying anti-patterns and fixing them. The even better news is that we, the developers, can make the code fit the needs of testing by talking with the testers, who are the ones actually testing it.

Can testers really affect the way code is written?

It really depends on the relationship between testers and developers. In an agile, cohesive team, there is openness. But in many cases, testers get their code “ready for testing” weeks or months after the developers have finished programming. At that point, asking developers to “go back in time,” drop what they are doing, and change what they consider “already working code” does not sound very appealing.

But there are other problems that make developers less attentive to their testers' needs. First, they believe (because of what organizations teach them) that once they push their code, it becomes someone else's job. They are not aware of the effort testers must put in to carry out the tests. In fact, many times they are not even aware of the test plans, the resources, and sometimes even the results (apart from the bugs). So the distance in time, knowledge, and thinking makes the discussion between developers and testers ineffective, particularly in terms of testability. Arriving late with requests is arriving too late.

Code patterns that lead to better testability

There are many code patterns and anti-patterns that we know to be good (and bad) for developers. Usually, we think of them in terms of maintainability. But they also have an impact on testability.
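The excerpt does not name a specific anti-pattern, so the following Python sketch picks one commonly cited example as an assumption: a hard-coded dependency on the system clock, and the same logic with the clock passed in. Both function names are hypothetical.

```python
from datetime import datetime
from typing import Callable


def greeting_hard_to_test() -> str:
    """Anti-pattern (assumed example): the result depends on the real clock,
    so a test cannot control which branch runs."""
    hour = datetime.now().hour
    return "Good morning" if hour < 12 else "Good afternoon"


def greeting(now: Callable[[], datetime] = datetime.now) -> str:
    """Same logic, but the clock is a parameter that a test can replace."""
    hour = now().hour
    return "Good morning" if hour < 12 else "Good afternoon"
```

A test can then call `greeting(lambda: datetime(2024, 1, 1, 9, 0))` and get a deterministic result, while production code keeps calling `greeting()` with the default clock.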

This article shows how to simplify application code by using the constraints that the DBMS provides.
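As a minimal sketch of that idea, and not the linked article's own example, the Python snippet below lets SQLite enforce uniqueness and a price rule so the application code needs no validation branches of its own. The `product` table, its columns, and the helper names are assumptions made for illustration.

```python
import sqlite3


def create_schema(conn: sqlite3.Connection) -> None:
    # The rules live in the schema: sku must be unique, price cannot be negative.
    conn.execute(
        """
        CREATE TABLE product (
            id INTEGER PRIMARY KEY,
            sku TEXT NOT NULL UNIQUE,
            price_cents INTEGER NOT NULL CHECK (price_cents >= 0)
        )
        """
    )


def add_product(conn: sqlite3.Connection, sku: str, price_cents: int) -> bool:
    """Insert a product; return False if the database rejects the row."""
    try:
        with conn:  # commits on success, rolls back on error
            conn.execute(
                "INSERT INTO product (sku, price_cents) VALUES (?, ?)",
                (sku, price_cents),
            )
        return True
    except sqlite3.IntegrityError:
        # Raised for UNIQUE, NOT NULL, and CHECK violations alike.
        return False
```

With an in-memory database (`sqlite3.connect(":memory:")`), inserting the same `sku` twice or a negative price returns `False` without any explicit check in the application code.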

This is my summary of The Pragmatic Programmer, by Andrew Hunt and David Thomas. I use it while learning and as a quick reference. It is not intended to be a standalone substitute for the book, so if you really want to learn the concepts presented here, buy and read the book and use this repository as a reference and guide.

Mickael Ruau's insight:

How to Improve Code Quality?

What is an efficient way to ensure that the code is working? One method is to make sure your developers are up to date on what's happening in the field, so they can provide input on ways to solve bugs. These software quality assurance best practices ensure consistency in maintaining better code throughout the project. Another method of improving code quality is to ensure that your team members understand the nature of the problems they are attempting to solve. Finally, make sure your team members know how to use the right tools and techniques to analyze code quality metrics and improve the quality of the code they write. Moreover, make sure to include the following steps in your code improvement plan:

Routine Manual or Automated Code Reviews

While manual reviews are recommended for relatively simple projects, automated tools can be beneficial in tackling more complex projects with a heavier technical debt burden. One thing developers often don't consider when building a web application is whether the complexity of the resulting application is reflected in the complexity of the codebase.

Audit informatique (IT audit) - Wikipédia

An IT audit (Information Technology Audit) aims to identify and assess the risks (operational, financial, and reputational in particular) associated with the IT activities of a company or public administration.

Mickael Ruau's insight:

Contents

Stuck with broken code? Can't identify the cause of the bug? It's time to analyze your code for the problems behind it!

There are many open source tools that can help identify bad coding practices.

The untested code gap kills productivity and predictability. We don’t get to choose where the bugs go so that they are easy to find; they choose their hiding places, and have no mercy on us. They flare up and put teams into firefighting mode.

We should write tests to enable developers to move fast with confidence. Code is always evolving, so question everything, collect experience, and judge for yourself.

Mickael Ruau's insight:

Code coverage has nothing to do with code quality, which has been proven statistically. Kent Beck (author of the book TDD By Example) answered the question of how many unit tests a system should have:

Martin Fowler addressed whether a system can have too many tests, saying,

That's not to say coverage doesn't have value. As Martin Fowler points out, it is a good way to identify untested code.

"One difference between a smart programmer and a professional programmer is that the professional understands that clarity is king. Professionals use their powers for good and write code that others can understand." - Clean Code: A Handbook of Agile Software Craftsmanship by Robert C Martin.

Google is now joining the debate and shows that, in practice, comments can very often be avoided. “When reading code, often there is nothing more helpful than a well-placed comment. However, comments are not always good. Sometimes the need for a comment can be a sign that the code should be refactored. Use a comment when it is infeasible to make your code self-explanatory,” explain Dori Reuveni and Kevin Bourrillion on the Google Testing Blog.
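Here is a short Python sketch of that refactoring advice, written for illustration rather than quoted from the blog post. The function names and the discount rule are assumptions.

```python
def final_price_with_comment(num_items: int, item_price: float) -> float:
    # Subtract discount from price.
    return (num_items * item_price) - min(5, num_items) * item_price * 0.1


def final_price_self_explaining(num_items: int, item_price: float) -> float:
    # Named intermediate values carry the meaning the comment used to carry.
    price = num_items * item_price
    discount = min(5, num_items) * item_price * 0.1
    return price - discount
```

Both functions compute the same value. In the second, the variable names `price` and `discount` make the explanatory comment unnecessary.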